The new GA MySQL Cluster 7.2 Release (7.2.4) just announced by Oracle includes 2 new features which when combined can improve the performance of joins by a factor of 70x (or even higher). The first enhancement is that MySQL Cluster now provides the MySQL Server with better information on the available indexes which allows the MySQL optimizer to automatically produce better query execution plans. Previously it was up to the user to manually provide hints to the optimizer. The second new feature is Adaptive Query Localization which allows the work of the join to be distributed across the data nodes (local to the data it’s working with) rather than up in the MySQL Server; this allows more computing power to be applied to calculating the join as well as dramatically reducing the number of messages being passed around the system. The combined result is that your joins can now run MUCH faster and this post describes a test that results in a 70x speed-up for a real-world query.

The Query

The join used in this test is based on a real-world example used for an on-line store/Content Management System. The original query identified all of the media in the system which was appropriate to a particular device and for which a user is entitled to access. As this query is part of a customer’s application I’ve replaced all of the table and column names.

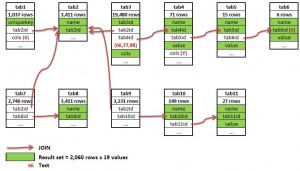

The join runs across 11 tables (which contain 33.5K rows in total) and produces a result set of 2,060 rows, each with 19 columns. The figure to the right illustrates the join and the full join is included below.

SELECT

tab1.uniquekey,

tab8.name,

tab8.tab8id,

tab11.name,

tab11.tab11id,

tab11.value,

tab10.tab10id,

tab10.name,

tab2.name,

tab2.tab2id,

tab4.value + tab5.value + tab6.value,

tab3.colx,

tab3.tab3id,

tab4.tab4id,

tab4.name,

tab5.tab5id,

tab5.name,

tab6.tab6id,

tab6.name

FROM

tab1,tab2,tab3,tab4,tab5,tab6,tab7,tab8,tab9,tab10,tab11

WHERE

tab7.tab2id = tab2.tab2id AND

tab7.tab8id = tab8.tab8id AND

tab9.tab2id = tab2.tab2id AND

tab9.tab10id = tab10.tab10id AND

tab10.tab11id = tab11.tab11id AND

tab3.tab2id = tab2.tab2id AND

tab3.tab4id = tab4.tab4id AND

tab4.tab5id = tab5.tab5id AND

tab4.colz = 'Y' AND

tab5.tab6id = tab6.tab6id AND

tab6.tab6id IN (6) AND

(tab3.tab4id IN (66, 77, 88)) AND

tab1.tab2id = tab2.tab2id AND

tab1.colx = 6;

Enabling AQL

First of all, make sure that you’re using the GA version of MySQL Cluster (7.2.4 or later); the Open Source version is available from http://dev.mysql.com/downloads/cluster/#downloads

and the commercial version from the Oracle Software Delivery Cloud. You can double check that AQL is enabled:

mysql> show variables like 'ndb_join_pushdown'; | ndb_join_pushdown | ON |

Running the Query & Results

To get the full benefit from AQL, you should run “ANALYZE TABLE;” once for each of the tables (no need to repeat for every query and it only needs running on one MySQL Server in the Cluster). This is very important and you should start doing this as a matter of course when you create or modify a table.

For this test, 3 machines were used:

- Intel Core 2 Quad Core @2.83 GHz; 8 Gbytes RAM; single, multi-threaded data node (ndbmtd)

- Intel Core 2 Quad Core @2.83 GHz; 8 Gbytes RAM; single, multi-threaded data node (ndbmtd)

- 4 Core Fedora VM running on VirtualBox on Windows 7, single MySQL Server

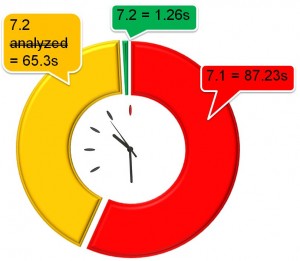

The query was then run and compared to MySQL CLuster 7.1.15a:

| MySQL Cluster 7.1.15a | 1 minute 27.23 secs | |

| MySQL Cluster 7.2.1 (without having run ANALYZE TABLE) | 1 minute 5.3 secs | 1.33x Cluster 7.1 |

| MySQL Cluster 7.2.1 (having run ANALYZE TABLE) | 1.26 secs | 69.23x Cluster 7.1 |

How it Works

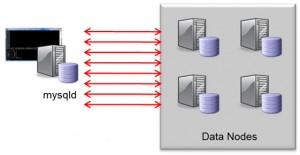

Traditionally, joins have been implemented in the MySQL Server where the query was executed. This is implemented as a nested-loop join; for every row from the first part of the join, a request has to be sent to the data nodes in order to fetch the data for the next level of the join and for every row in that level…. This method can result in a lot of network messages which slows down the query (as well as wasting resources). When turned on, Adaptive Query Localization results in the hard work being pushed down to the data nodes where the data is locally accessible. As a bonus, the work is divided amongst the pool of data nodes and so you get parallel execution.

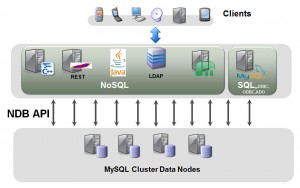

I’ll leave the real deep and dirty details to others but cover the basic concepts here. All API nodes access the data nodes using the native C++ NDB API, the MySQL Server is one example of an API node (the new Memcached Cluster API is another). This API has been expanded to allow parameterised or linked queries where the input from one query is dependent on the previous one. To borrow an example from an excellent post by Frazer Clement on the topic, the classic way to implement a join would be…

SQL > select t1.b, t2.c from t1,t2 where t1.pk=22 and t1.b=t2.pk; ndbapi > read column b from t1 where pk = 22;

[round trip]

(b = 15) ndbapi > read column c from t2 where pk = 15;

[round trip]

(c = 30)

[ return b = 15, c = 30 ]

Using the new functionality this can be performed with a single network round trip where the second read operation is dependent on the results of the first…

ndbapi > read column @b:=b from t1 where pk = 22; read column c from t2 where pk=@b;

[round trip]

(b = 15, c = 30)

[ return b = 15, c = 30 ]

You can check whether your query is fitting these rules using EXPLAIN, for example:

mysql> set ndb_join_pushdown=on;

mysql> EXPLAIN SELECT COUNT(*) FROM residents,postcodes WHERE residents.postcode=postcodes.postcode AND postcodes.town="MAIDENHEAD"; +----+-------------+-----------+--------+---------------+---------+---------+------------------------------+--------+--------------------------------------------------------------------------+ | id | select_type | table | type | possible_keys | key | key_len | ref | rows | Extra | +----+-------------+-----------+--------+---------------+---------+---------+------------------------------+--------+--------------------------------------------------------------------------+ | 1 | SIMPLE | residents | ALL | NULL | NULL | NULL | NULL | 100000 | Parent of 2 pushed join@1 | | 1 | SIMPLE | postcodes | eq_ref | PRIMARY | PRIMARY | 22 | clusterdb.residents.postcode | 1 | Child of 'residents' in pushed join@1; Using where with pushed condition | +----+-------------+-----------+--------+---------------+---------+---------+------------------------------+--------+--------------------------------------------------------------------------+

mysql> EXPLAIN EXTENDED SELECT COUNT(*) FROM residents,postcodes,towns

WHERE residents.postcode=postcodes.postcode AND

postcodes.town=towns.town AND towns.county="Berkshire";

+----+-------------+-----------+--------+---------------+---------+---------+------------------------------+--------+----------+------------------------------------------------------------------------------------------------------------------------+

| id | select_type | table | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+-----------+--------+---------------+---------+---------+------------------------------+--------+----------+------------------------------------------------------------------------------------------------------------------------+

| 1 | SIMPLE | residents | ALL | NULL | NULL | NULL | NULL | 100000 | 100.00 | Parent of 3 pushed join@1 |

| 1 | SIMPLE | postcodes | eq_ref | PRIMARY | PRIMARY | 22 | clusterdb.residents.postcode | 1 | 100.00 | Child of 'residents' in pushed join@1 |

| 1 | SIMPLE | towns | eq_ref | PRIMARY | PRIMARY | 22 | clusterdb.postcodes.town | 1 | 100.00 | Child of 'postcodes' in pushed join@1; Using where with pushed condition: (`clusterdb`.`towns`.`county` = 'Berkshire') |

+----+-------------+-----------+--------+---------------+---------+---------+------------------------------+--------+----------+------------------------------------------------------------------------------------------------------------------------+

Note that if you want to check for more details why your join isn’t currently being pushed down to the data node then you can use “EXPLAIN EXTENDED” and then “SHOW WARNINGS” to get more hints. Hopefully that will allow you to tweak your queries to get the best improvements.

PLEASE let us know your experiences and give us examples of queries that worked well and (just as importantly) those that didn’t so that we can improve the feature – just leave a comment on this Blog with your table schemas, your query and your before/after timings.

The explain output in this blog post is not very readable.

It looks like a really nice feature!

Impressive results, but it seems dodgy that your MySQL server is in a VirtualBox VM running on Windows 7. Given that the win here is from offloading work from the server to the data nodes, aren’t you maximizing the win by having an incredibly crippled server to begin with? Why not just run that server on metal similar to the data nodes? (That is, why the virtualized environment at all?) Regardless, what’s the hardware specification for the MySQL server? If that’s also a Core 2, it doesn’t have EPT — which will grossly magnify any work that’s offloaded simply by avoiding the hefty tax of pre-EPT virtualization. At the very least, this aspect should be called out with a huge asterisk; you’re making a huge deal about the 70X win, and it’s entirely unclear how much of that is due to the odd (rigged?) nature of the configuration…

Hi Brian,

I don’t have the exact spec of the machines to hand but the data nodes were running on 3 year old desktops that had very modest CPUs even when they were new; the MySQL server was running on a Core i7 machine with fast memory and SSD. In other words the mysqld wasn’t running in too constrained an environment. In any event, the speed up from AQL doesn’t really come from lightening the CPU load on the mysqld, it comes from reducing the messaging between the nodes.

Why this environment? This is the environment I had to hand – 2 identical machines to run the data nodes and a much more powerful machine that I could run a VM within for the mysqld.

The engineering team have run many tests on pure bare-metal environments and have seen far higher speed ups. Mileage definitely does vary.

As always the true test is for people to run their own queries in their own environments and see what results they get (note that the query may need to be tweaked in order to be suitable for pushing down to the data node).

Regards, Andrew.

Thanks for the response; given that a bare-metal environment better approximates the production MySQL servers that I work with, I’m most interested in the “far higher speed ups” you’ve mentioned for these. Was that far higher than 70x?

See above comment – I’ve repeated on bare metal for the mysqld and saw a 230x speedup over Cluster7.1.

Regards, Andrew.

Andrew,

I appreciate the response — but given that this is essentially a cobbled-together experiment, you probably shouldn’t treat it as a benchmark. Right now “70X faster” is appearing everywhere — and that number starts here and with this environment. In my experience, when you have big wins like this, you should just call it that: a big win. How big? Really big — perhaps many multiples of performance. By being more specific but with such a polluted experiment, you only serve to raise doubts where perhaps they are not warranted…

As there was some scepticism over the use of a Virtual Machine in the original tests, I’ve repeated them using bare metal.

I took a slightly different approach this time so that I could compare with Cluster 7.1 as well (I used 7.1.15a) as well as Cluster 7.2.4 (much more recent than the load used for the original test).

So here are the results:

Baseline (7.1.15a) = 35 seconds

7.2.4 (no analyze table, no AQL) = 26.75 sec = 1.3x baseline

7.2.4 (analyze table, no AQL) = 10.1 sec = 3.5x baseline

7.2.4 (analyze table, AQL) = 0.15 sec = 230x baseline!

Note that the reason I’ve included with/without analyze table is that 7.2 made this work for Cluster tables.

In terms of it being an arbitrary test – this is a real customer query that we’ve used as the internal benchmark for years.

I’ll repeat myself in saying that people should (as always) run their own application in their own environment to see what results they get but I believe that these results are still useful in giving people a flavour of what they might see.

For me query over 33.5K records which takes 1.2s is not big deal – rather same query taking almost 90s is big loose. Don’t you think so?

Hi Kompas,

see earlier comment – using bare metal for the mysqld I’m seeing a 230x speedup over Cluster 7.1 – down to 0.15 seconds.

Note that distributed databases aren’t really designed for complex inter-shard joins (this is one reason why NoSQL solutions tend to jettison any ability to do them at all). With Cluster 7.2 we’ve made orders of magnitude improvements to the speed of these joins – taking Cluster from a position where (in many cases) you’d have to avoid use cases that required big, complex joins to one where it’s now very practical.

Regards, Andrew.

MySQL Cluster IaaS best practices for Azure – High Availability, Fault Tolerance, Scalability

Introduction MySQL Cluster, if used correctly, has proven to be one of the most fault tolerant, scalable