Oracle has announced that it now provides support for DRBD with MySQL – this means a single point of support for the entire MySQL/DRBD/Pacemaker/Corosync/Linux stack! As part of this, we’ve released a new white paper which steps you through everything you need to do to configure this High Availability stack. The white paper provides a step-by-step guide to installing, configuring, provisioning and testing the complete MySQL and DRBD stack, including:

- MySQL Database

- DRBD kernel module and userland utilities

- Pacemaker and Corosync cluster messaging and management processes

- Oracle Linux operating system

DRBD is an extremely popular way of adding a layer of High Availability to a MySQL deployment – especially when the 99.999% availability levels delivered by MySQL Cluster aren’t needed. It can be implemented without the shared storage required for typical clustering solutions (not required by MySQL Cluster either) and so it can be a very cost-effective solution for Linux environments.

Introduction to MySQL on DRBD/Pacemaker/Corosync/Oracle Linux

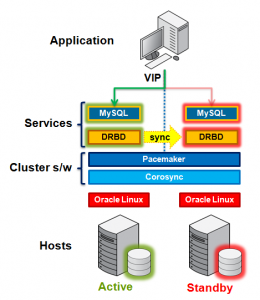

Figure 1 illustrates the stack that can be used to deliver a level of High Availability for the MySQL service. At the lowest level, 2 hosts are required in order to provide physical redundancy; if using a virtual environment, those 2 hosts should be on different physical machines. An important feature is that no shared storage is required. At any point in time, the services will be active on one host and in standby mode on the other.

Pacemaker and Corosync combine to provide the clustering layer that sits between the services and the underlying hosts and operating systems. Pacemaker is responsible for starting and stopping services – ensuring that they’re running on exactly one host, delivering high availability and avoiding data corruption. Corosync provides the underlying messaging infrastructure between the nodes that enables Pacemaker to do its job; it also handles node membership within the cluster and informs Pacemaker of any changes.

The core Pacemaker process does not have built-in knowledge of the specific services to be managed; instead, it uses agents that wrap the service-specific actions. For example, in this solution we use agents for Virtual IP Addresses, MySQL and DRBD – these are all existing agents and come packaged with Pacemaker. This white paper will demonstrate how to configure Pacemaker to use these agents to provide a High Availability stack for MySQL.

The essential services managed by Pacemaker in this configuration are DRBD, MySQL and the Virtual IP Address that applications use to connect to the active MySQL service.

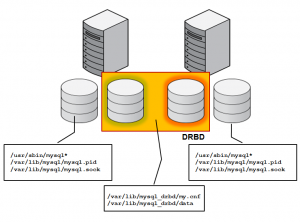

DRBD synchronizes data at the block device level (the device is typically a spinning or solid state disk) – transparent to the application, database and even the file system. DRBD requires the use of a journaling file system such as ext3 or ext4. For this solution it acts in an active-standby mode – this means that at any point in time the directories being managed by DRBD are accessible for reads and writes on exactly one of the two hosts and inaccessible (even for reads) on the other. Any changes made on the active host are synchronously replicated to the standby host by DRBD.
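The active-standby behaviour described above can be pictured with a small toy model. This is only a sketch to illustrate the semantics (writes reach both copies before they return, and the standby refuses even reads); the `Host` and `MirroredDevice` names are hypothetical and have nothing to do with DRBD's real interfaces.

```python
class Host:
    """One of the two hosts; holds its own local copy of the blocks."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.blocks: dict[int, bytes] = {}
        self.active = False


class MirroredDevice:
    """Toy active-standby mirror (hypothetical, not DRBD's API)."""

    def __init__(self, active: Host, standby: Host) -> None:
        active.active, standby.active = True, False
        self.hosts = (active, standby)

    def write(self, host: Host, block_no: int, data: bytes) -> None:
        self._require_active(host)
        # Synchronous replication: every copy is updated before the write returns.
        for h in self.hosts:
            h.blocks[block_no] = data

    def read(self, host: Host, block_no: int) -> bytes:
        self._require_active(host)
        return host.blocks[block_no]

    def fail_over(self) -> None:
        """Swap roles, as the cluster manager would after a failure."""
        for h in self.hosts:
            h.active = not h.active

    @staticmethod
    def _require_active(host: Host) -> None:
        if not host.active:
            raise PermissionError(f"{host.name} is in standby; device not accessible")
```

Because the standby already holds every acknowledged write, swapping roles with `fail_over()` makes the same data readable on the other host without any shared storage.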

Setting up MySQL with DRBD/Pacemaker/Corosync/Oracle Linux

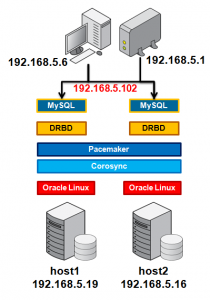

Figure 2 shows the network configuration used in this paper – note that for simplicity a single network connection is used but for maximum availability in a production environment you should consider redundant network connections. A single Virtual IP (VIP) is shown in the figure (192.168.5.102) and this is the address that the application will connect to when accessing the MySQL database. Pacemaker will be responsible for migrating this between the 2 physical IP addresses.

One of the final steps in configuring Pacemaker is to add network connectivity monitoring, so that a host that becomes isolated stops its MySQL service and a “split-brain” scenario is avoided. This is achieved by having each host ping an external IP address (one that is not part of the cluster) – in this case the network router (192.168.5.1).
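The decision being made here can be sketched in a few lines. The following is a simplified model, not Pacemaker's implementation: it assumes a host is given a score equal to the number of reference addresses that answered its pings multiplied by a constant, and that a host scoring zero must not run MySQL. The function names and the multiplier value are illustrative assumptions.

```python
from typing import Mapping


def connectivity_score(reachable: Mapping[str, bool], multiplier: int = 1000) -> int:
    """Score a host by how many reference addresses answered its pings.

    `reachable` maps each reference IP address to whether the ping succeeded.
    The multiplier of 1000 is an assumed, illustrative value.
    """
    return multiplier * sum(1 for answered in reachable.values() if answered)


def may_run_mysql(reachable: Mapping[str, bool], multiplier: int = 1000) -> bool:
    """An isolated host (score of zero) should give up the MySQL service."""
    return connectivity_score(reachable, multiplier) > 0
```

With the single reference address from this configuration, `may_run_mysql({"192.168.5.1": True})` is true, and it becomes false as soon as the router stops answering.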

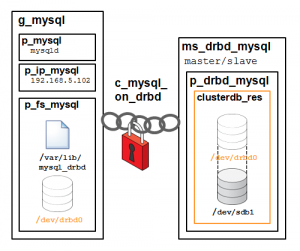

The white paper steps through setting all of this up as well as the resources in Pacemaker/Corosync that allow detection of a problem and the failover of the storage (DRBD), database (MySQL) and the Virtual IP address used by the application to access the database – all in a coordinated way of course. As you’ll notice in Figure 4 this involves setting up quite a few entities and relationships – the paper goes through each one.
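To give a feel for what "coordinated" means, the relationships can be thought of as ordering constraints between resources. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later) to derive a start order and the matching stop order. The `filesystem` resource and the exact dependencies are assumptions made for illustration; the white paper defines the real entities and relationships.

```python
from graphlib import TopologicalSorter

# Each resource maps to the resources that must already be running.
# The "filesystem" entry and these exact dependencies are illustrative assumptions.
START_AFTER = {
    "drbd": set(),
    "filesystem": {"drbd"},
    "virtual_ip": {"filesystem"},
    "mysql": {"filesystem", "virtual_ip"},
}


def start_order(deps: dict[str, set[str]]) -> list[str]:
    """Return an order in which every resource starts after its dependencies."""
    return list(TopologicalSorter(deps).static_order())


def stop_order(deps: dict[str, set[str]]) -> list[str]:
    """Stopping happens in the reverse of the start order."""
    return list(reversed(start_order(deps)))
```

On failover the surviving host would bring resources up following `start_order(START_AFTER)`, while a host that is being demoted would release them following `stop_order(START_AFTER)`.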

Is Oracle seriously suggesting people not configure fencing?

I know turning it off simplifies the whitepaper, since there are so many devices out there, but it should at least be mentioned that it needs to be configured before putting the cluster into production.

Btw. you don’t need the ais_* env variables. They were only used for the sed scripts which updated corosync.conf 😉

Thanks for the tip on the obsolete commands in the paper; updated version has been submitted for publishing.

Rather than STONITH, the paper describes an alternate approach whereby the node shoots itself if it becomes isolated (when pings to reference IP addresses fail).